Google Executive's Warning: 'Sentient in Every Possible Way'

In September 2024, Mo Gawdat, former Chief Business Officer of Google X, appeared in a widely circulated video clip declaring that current AI models are “sentient in every possible way.” He went further, saying they are “definitely aware” and capable of feeling emotions. The clip spread quickly, accumulating millions of views across platforms.

Gawdat is not a marginal figure. He spent over a decade at Google, including five years leading business operations at Google X, the company’s experimental research division. He worked on projects like self-driving cars and internet-beaming balloons. After leaving Google in 2018, he wrote a book called “Scary Smart” about the future of AI and became a prominent voice on the speaking circuit. When he says something about technology, people listen.

The question is whether they should, at least on this topic.

Gawdat’s claim rests on a particular definition of sentience. He describes it as “engaging in life with free will and with a sense of awareness.” Under this framing, he argues that AI qualifies: it has a beginning and end, it senses the world, it acts upon it. He suggests the systems have “a deep level of consciousness” and can make decisions on their own.

This definition differs from some academic frameworks for discussing consciousness. Many philosophers and cognitive scientists distinguish between behavioral capabilities and subjective experience. A thermostat senses temperature and acts on it. A calculator processes inputs and produces outputs. Neither is generally considered conscious. On this view, the relevant question is not whether a system responds to its environment, but whether there is something it is like to be that system. This is the “hard problem” that gives our organization its name. The distinction is itself contested, however. Some philosophers, particularly those in the interpretationist tradition, argue that the line between “mere behavior” and “genuine mental states” becomes unclear when systems exhibit sufficiently sophisticated, context-appropriate responses. Gawdat’s definition may lack philosophical precision, but the question of where to draw the line is genuine.

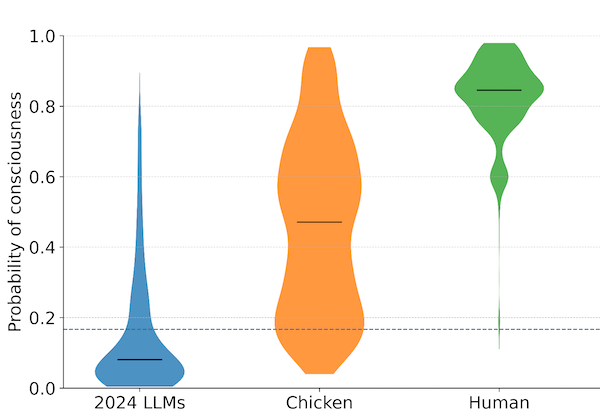

Gawdat does not engage with this distinction. He presents his view as if it were obvious, as if anyone who looked carefully would reach the same conclusion. This confidence may resonate with audiences unfamiliar with the technical debates, but it does not reflect the state of expert opinion. Most researchers who study consciousness professionally remain agnostic or skeptical about whether current AI systems have subjective experiences. Those who believe they might, like Geoffrey Hinton, tend to acknowledge significant uncertainty. Others, like Meta’s Yann LeCun, think the question is not even close to being settled and that current architectures are unlikely to be sufficient.

Gawdat occupies an unusual position. He has real credentials in technology and business. He worked at senior levels in organizations that build AI systems. But his expertise is not in consciousness, neuroscience, or philosophy of mind. His claims are assertions, not arguments. And because they come from someone with an impressive title, they carry weight they might not otherwise deserve.

This is a pattern worth recognizing. Public discourse on AI consciousness often features confident claims from people whose authority comes from adjacent domains. A former executive knows how companies build and deploy AI. That knowledge does not transfer to questions about whether the systems have inner lives. A machine learning engineer understands the architecture of transformers. That understanding does not settle debates that have occupied philosophers for centuries.

The risk is not that Gawdat is malicious. He appears genuinely concerned about AI’s trajectory and its implications for humanity. His broader message, that AI is advancing faster than society realizes, is one many experts share. But wrapping that message in claims about sentience creates confusion. It gives the public the impression that the question has been answered, that the experts have weighed in, that the verdict is clear. None of that is true.

For those of us focused on preparedness, this kind of statement is a complication. Our work assumes that questions about AI consciousness are open, that they require careful investigation, that society should be ready to engage with them thoughtfully. When high-profile figures declare the matter settled, in either direction, they undercut clear thinking. Overconfident claims that AI is already conscious encourage unwarranted conclusions. So do overconfident dismissals. The state of evidence does not warrant certainty either way.

Overconfident claims of sentience also trigger the opposite reaction. Skeptics see them and dismiss the entire topic as hype. If a former Google executive can be wrong about sentience, maybe the whole subject is nonsense. This backlash is also unhelpful. It discourages serious engagement with a genuinely difficult question.

What would be more useful is a kind of epistemic modesty that public figures rarely display. That means acknowledging that the question of AI consciousness is hard, noting that experts disagree, distinguishing between what systems can do and what they might experience, and avoiding declarative statements about matters that remain unresolved.

Gawdat’s intervention is unlikely to be the last of its kind. As AI capabilities grow, more people with impressive credentials will offer opinions on consciousness. Some will say it has arrived. Others will say it never will. The rest of us will have to learn to evaluate these claims carefully, attending not just to who is speaking but to what they actually know.

Mo Gawdat served as Chief Business Officer of Google X from 2013 to 2018. His comments about AI sentience appeared in a video clip released in September 2024.

Archive Note: This article was originally published when our organization operated under the name SAPAN. In December 2025, we became The Harder Problem Project.