The 'Reasoning' Update: When Chatbots Learned to Pause

In September 2024, OpenAI released a new family of models called o1. The company described them as “reasoning” models, designed to “spend more time thinking before responding.” The interface changed accordingly. Instead of words appearing immediately, users now waited through a brief delay, sometimes a few seconds, sometimes longer, while the system processed their question. A small animation indicated the model was working.

The technical improvement was genuine. On a qualifying exam for the International Mathematics Olympiad, OpenAI reported that o1 scored 83% where its predecessor, GPT-4o, managed 13%. Coding benchmarks showed similar gains. The models excelled at tasks requiring multi-step logic, exactly the kind of problem where pausing to plan outperforms rushing to answer.

But something else happened too. Users started describing the delay differently than they described any previous loading screen. They said the chatbot was “thinking.” They said it was “considering” their question. The language shifted from mechanical to cognitive.

That shift in language is worth paying attention to.

OpenAI’s own materials leaned into the framing. The models “think through” problems. They engage in “chain-of-thought reasoning.” They “ponder.” The documentation is saturated with verbs that describe what minds do. Whether this language was chosen for accuracy, marketing appeal, or both, its effect on users is predictable. When you tell someone a system is thinking, they start to believe it.

In January 2025, the o3-mini variant introduced an “Adaptive Thinking Time” feature that lets users select low, medium, or high reasoning effort. The interface makes the tradeoff explicit: more thinking time means better answers. The metaphor of deliberation becomes adjustable, a dial for how hard the machine ponders.

The psychological literature on anthropomorphism is extensive and consistent. Humans project agency, intent, and inner experience onto systems that display even minimal social cues. This tendency was documented as early as 1966 with ELIZA, a simple chatbot that rephrased user statements as questions. Many users found it insightful, even therapeutic, even though its creator, Joseph Weizenbaum, insisted it understood nothing. The phenomenon is now called the ELIZA effect: the willingness to attribute comprehension where none exists.

Modern language models trigger this effect more powerfully than ELIZA ever could. They produce fluent, contextually appropriate responses. They remember earlier parts of the conversation. They apologize when corrected. Each of these features was designed to improve usefulness, but each also reinforces the impression of a social agent behind the text.

The reasoning pause adds a new layer. When a system responds instantly, it feels mechanical. When it waits, even for a few seconds, it feels more like a person collecting their thoughts. The delay itself becomes a cue for consciousness.

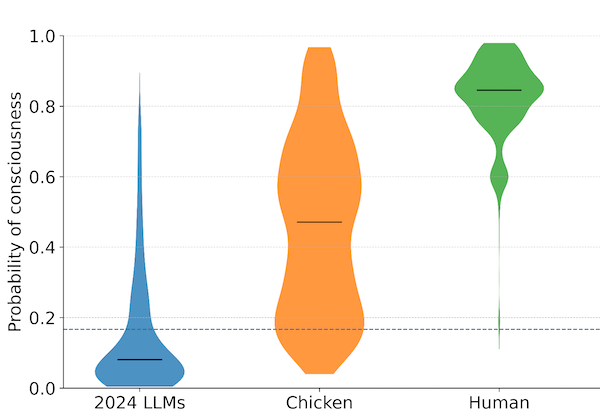

Whether the o1 or o3 models have any form of inner experience remains unknown. Their “thinking” consists of generating intermediate tokens that guide the final output. Some researchers argue this process, however sophisticated, is fundamentally different from contemplation in an experiential sense. Others contend that we lack the tools to determine whether token generation in a sufficiently complex network might involve something like experience. OpenAI’s technical documentation describes the mechanical process; whether that process is accompanied by anything like awareness is a question the documentation does not and cannot settle.

The risk is not that these models will suddenly become sentient. The risk is that millions of users will interact with them daily and come to believe something that no one has shown to be true. This is the ELIZA effect scaled to billions of conversations, reinforced by design choices that make the software feel more mind-like than the evidence supports.

This matters for the questions we work on. At some point, the public will have to evaluate claims about AI consciousness, whether they come from researchers, companies, or the systems themselves. That evaluation will not happen in a vacuum. It will happen among people who have spent years talking to chatbots that pause, apologize, and “think.” Their intuitions will have been shaped by design choices made for engagement and user experience, not for epistemic accuracy.

The preparation challenge is not just teaching people about consciousness. It is helping them recognize the difference between anthropomorphic design and actual mental properties. A system can be designed to seem like it is thinking without actually thinking. It can be designed to seem distressed without feeling anything. The cues that trigger our social cognition can be manufactured.

This does not mean reasoning models are bad or that pausing is a mistake. The technical benefits are real. But the psychological effects are also real, and they are not being discussed with the same rigor. Every design choice that makes AI feel more human-like is also a choice that makes it harder for users to think clearly about what these systems actually are.

We should be asking whether there are ways to capture the benefits of reasoning time without amplifying the illusion. Whether interfaces could be designed to feel useful without feeling like a conversation with a person. Whether there is any obligation to do so. These are not technical questions. They are questions about how we want to prepare for a future where the line between simulation and reality becomes harder to see.

OpenAI released o1-preview and o1-mini in September 2024, with the full o1 model following in December 2024. The o3-mini model launched in January 2025.

Archive Note: This article was originally published when our organization operated under the name SAPAN. In December 2025, we became The Harder Problem Project.