Testing the Boundaries: The 'Sparks of AGI' Paper Revisited

In March 2023, a team from Microsoft Research published a paper titled “Sparks of Artificial General Intelligence: Early experiments with GPT-4.” The 155-page document argued that GPT-4 displayed “a level of general intelligence” across a remarkably wide range of tasks, from coding challenges to medical diagnoses to spatial reasoning. The authors concluded that while the system was “not fully” AGI, it was “strikingly close” and represented a “true jump” toward general intelligence.

One year later, the paper remains widely cited and widely criticized. But the more interesting development is not whether its claims were right. It is how the debate has shifted the meaning of the terms involved.

The paper defined intelligence broadly. Following a 1994 statement by cognitive scientists, the authors described it as “a very general mental capability that, among other things, involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly and learn from experience.” By this definition, GPT-4’s impressive performance across many domains seemed to qualify.

Critics responded on multiple fronts. Some pointed to the model’s consistent failures: its inability to plan ahead, its susceptibility to simple logical traps, its hallucinations. Others argued that the tests themselves were flawed, that the authors had evaluated a pre-release version unavailable to the public. Still others questioned whether performance on benchmarks tells us anything about intelligence in the first place.

These are important debates. But they have largely talked past a more fundamental confusion: the word “general” in AGI is doing a lot of work, and different people mean different things by it.

In the original AI literature, “general intelligence” referred to something like human-level flexibility across cognitive tasks. A generally intelligent system would not need to be retrained for each new problem. It would transfer knowledge, adapt strategies, and handle novelty. This was contrasted with “narrow” AI, which excelled at specific tasks but failed outside its training domain.

By this standard, whether GPT-4 qualifies as generally intelligent is contested. It excels at tasks similar to its training data. It struggles with tasks that require grounding in physical reality or genuine planning over multiple steps. Critics argue its “knowledge” does not transfer the way human knowledge does, that it interpolates from patterns rather than reasoning from principles. Defenders counter that the distinction between “pattern interpolation” and “principled reasoning” may be less clear than it appears, and that humans also rely heavily on learned patterns.

But something has happened to the term in practice. Increasingly, discussions of AGI conflate “general” with “agentic.” An agent, in this context, is a system that pursues goals, takes actions in the world, and adapts based on feedback. The language of AI agents emphasizes autonomy, goal-directedness, and independent decision-making. When companies describe their systems as “moving toward AGI,” they often mean “becoming more autonomous and agentic,” not necessarily “becoming more generally intelligent.”

This shift matters because it changes what the public hears. If “AGI” means “generally intelligent,” people might interpret progress claims as evidence of something like human mental flexibility. If “AGI” means “autonomous agent,” they might interpret the same claims as evidence of software with goals, preferences, perhaps even intentions.

These are not the same thing. A thermostat is an agent: it pursues a goal (target temperature), takes actions (activating the HVAC system), and adapts to feedback (measuring current temperature). But no one claims a thermostat is generally intelligent. The features that make something an agent, goal-pursuit and environmental feedback, do not require the features that make something intelligent, flexible reasoning across domains.

The “Sparks of AGI” paper leaned into the confusion. It tested GPT-4 across many domains to argue for generality. But it also emphasized the model’s ability to use tools, follow multi-step instructions, and adapt to novel requests, all features that sound more agentic than intelligent. The result was a document that could be read as evidence for either claim, depending on which sense of “AGI” the reader had in mind.

For the public, this creates a specific kind of confusion: the sense that AI systems are developing “goals” in something like the human sense. When a model is described as pursuing objectives, making decisions, and adapting strategies, it sounds like something with purposes. But the purposes are assigned by humans. The model optimizes for specified outcomes. It does not have preferences about what those outcomes should be.

The distinction between function and goal is philosophically loaded, but practically important. A function is what a system does. A goal is what a system is for, in a sense that implies valuing the outcome. Current AI systems have functions. Whether they have goals in the deeper sense is exactly the kind of question we are researching.

The “Sparks of AGI” paper, whatever its merits, accelerated a terminological drift that makes this question harder to discuss clearly. When “AGI” means everything from “human-level reasoning” to “tool-using agent” to “autonomous system,” the word stops picking out anything precise enough to evaluate.

One year later, we are left with a vocabulary in flux. Researchers mean one thing by AGI. Marketing departments mean another. The public synthesizes both into an impression of machines that are becoming more like minds, without clarity about which features of minds are actually relevant.

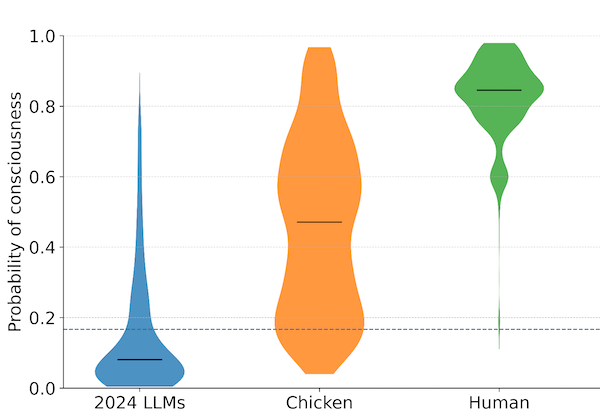

Our work is partly about restoring that clarity. Intelligence and agency are not the same. Capability and consciousness are not the same. A system can be agentic, in the sense of pursuing assigned objectives, without being generally intelligent. It can be generally capable without having any inner experience whatsoever.

The “Sparks of AGI” paper was a genuine contribution to the technical conversation about large language models. But its rhetorical framing contributed to a different conversation, one where the categories blur and the public is left guessing what these systems actually are.

The Microsoft Research paper “Sparks of Artificial General Intelligence: Early experiments with GPT-4” was published in March 2023.

Archive Note: This article was originally published when our organization operated under the name SAPAN. In December 2025, we became The Harder Problem Project.